Introduction

Backtest trading strategies or train reinforcement learning agents with tradingenv, an event-driven market simulator that implements the OpenAI/gym protocol.

Installation

tradingenv supports Python 3.7 or newer. The following command installs the latest release:

    pip install tradingenv

Notebooks, software tests and building the documentation require extra dependencies, which can be installed with:

    pip install "tradingenv[extra]"

Example - Reinforcement Learning - Lazy Initialisation

The package is built upon the industry-standard gym and therefore can be used in conjunction with popular reinforcement learning frameworks including rllib and stable-baselines3.
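As an illustration of what the gym protocol involves, here is a minimal sketch of the classic interaction loop: `reset` returns the first observation, and each `step` returns an observation, a reward, a done flag and an info dictionary. `ToyTradingEnv` and `run_episode` are hypothetical names written for this sketch using only the standard library; they are not part of tradingenv. Newer gym and gymnasium releases return a five-element tuple from `step`, so the loop may need adapting to the installed version.

```python
import random


class ToyTradingEnv:
    """Hypothetical single-asset environment following the classic gym protocol.

    Not part of tradingenv: it only mirrors the reset/step contract.
    """

    def __init__(self, prices):
        if len(prices) < 2:
            raise ValueError("need at least two prices")
        self.prices = list(prices)
        self.t = 0

    def reset(self):
        """Start a new episode and return the first observation (a price)."""
        self.t = 0
        return self.prices[self.t]

    def step(self, action):
        """Hold `action` (a weight in [0, 1]) over the next price change.

        Returns the classic 4-tuple (observation, reward, done, info).
        """
        previous, current = self.prices[self.t], self.prices[self.t + 1]
        self.t += 1
        asset_return = current / previous - 1.0
        reward = action * asset_return  # return earned on the invested fraction
        done = self.t == len(self.prices) - 1  # no further price to step to
        return current, reward, done, {"asset_return": asset_return}


def run_episode(env, policy):
    """Run one full episode and return the sum of rewards."""
    obs = env.reset()
    done = False
    total_reward = 0.0
    while not done:
        action = policy(obs)
        obs, reward, done, info = env.step(action)
        total_reward += reward
    return total_reward


if __name__ == "__main__":
    toy_env = ToyTradingEnv([100.0, 110.0, 99.0])  # made-up prices
    rng = random.Random(0)
    print(run_episode(toy_env, lambda obs: rng.random()))  # random agent
    print(run_episode(toy_env, lambda obs: 1.0))  # always fully invested
```

Any object exposing this contract can stand in for `policy` or `env` in the loop, which is what lets reinforcement learning frameworks drive an environment without knowing its internals.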

Example - Reinforcement Learning - Custom Initialisation

Use custom initialisation to personalise the design of the environment, including the reward function, transaction costs, observation window and leverage.
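To make two of these design choices concrete, the sketch below computes a one-step reward as the portfolio return net of proportional transaction costs and rejects allocations above a leverage cap. `net_reward`, `cost_rate` and `max_leverage` are hypothetical names chosen for illustration in plain Python; they are not tradingenv's API, and the library's own reward and cost components may be defined differently.

```python
def net_reward(weights_before, weights_after, asset_returns,
               cost_rate=0.001, max_leverage=1.0):
    """One-step portfolio return net of proportional transaction costs.

    weights_before: portfolio weights held before rebalancing.
    weights_after:  target weights chosen by the agent.
    asset_returns:  simple returns of each asset over the next step.
    cost_rate:      cost paid per unit of turnover (hypothetical default).
    max_leverage:   cap on gross exposure, i.e. the sum of absolute weights.
    """
    gross_exposure = sum(abs(w) for w in weights_after)
    if gross_exposure > max_leverage + 1e-12:
        raise ValueError(
            f"gross exposure {gross_exposure:.3f} exceeds cap {max_leverage:.3f}"
        )
    # Turnover: total absolute change in weights caused by the rebalance.
    turnover = sum(abs(a - b) for a, b in zip(weights_after, weights_before))
    gross_return = sum(w * r for w, r in zip(weights_after, asset_returns))
    return gross_return - cost_rate * turnover


if __name__ == "__main__":
    # Moving from cash to a 60/40 allocation; returns are made-up numbers.
    print(net_reward([0.0, 0.0], [0.6, 0.4], [0.01, -0.005]))
```

With these inputs the gross return is 0.004 and the turnover is 1.0, so the cost of 0.001 leaves a net reward of approximately 0.003.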

Example - Backtesting

Thanks to the event-driven design, tradingenv is agnostic with respect to the type and time-frequency of the events. This means that you can run simulations using irregularly sampled trade and quote data, daily closing prices, monthly economic data or alternative data. Supported financial instruments include stocks, ETFs and futures.
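The idea behind frequency-agnostic simulation can be sketched with the standard library alone: each data source is a time-sorted stream of events, and the simulator consumes them in chronological order regardless of how often each source ticks. The `replay` helper and the sample events below are hypothetical, with made-up values; tradingenv's own event classes are not shown here.

```python
import heapq
from datetime import datetime


def replay(*streams):
    """Merge time-sorted streams of (timestamp, name, value) chronologically."""
    return heapq.merge(*streams, key=lambda event: event[0])


# Three sources with different sampling frequencies (illustrative values only).
quotes = [
    (datetime(2023, 1, 2, 9, 30, 0), "SPY quote", 380.10),
    (datetime(2023, 1, 2, 9, 30, 7), "SPY quote", 380.25),
]
daily_close = [(datetime(2023, 1, 2, 16, 0), "TLT close", 101.40)]
monthly_macro = [(datetime(2023, 1, 2, 8, 30), "macro release", 48.4)]

if __name__ == "__main__":
    state = {}
    for timestamp, name, value in replay(quotes, daily_close, monthly_macro):
        state[name] = value  # each event updates the latest known state
        print(timestamp.isoformat(), name, value)
```

Because the loop only depends on timestamps, adding a new data source amounts to passing one more sorted stream to `replay`.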

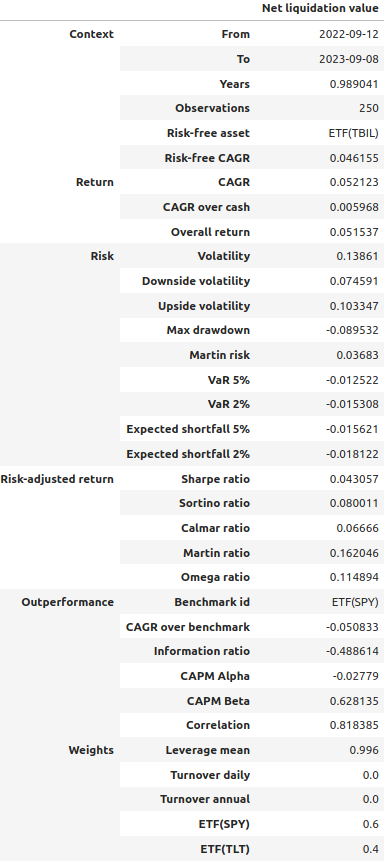

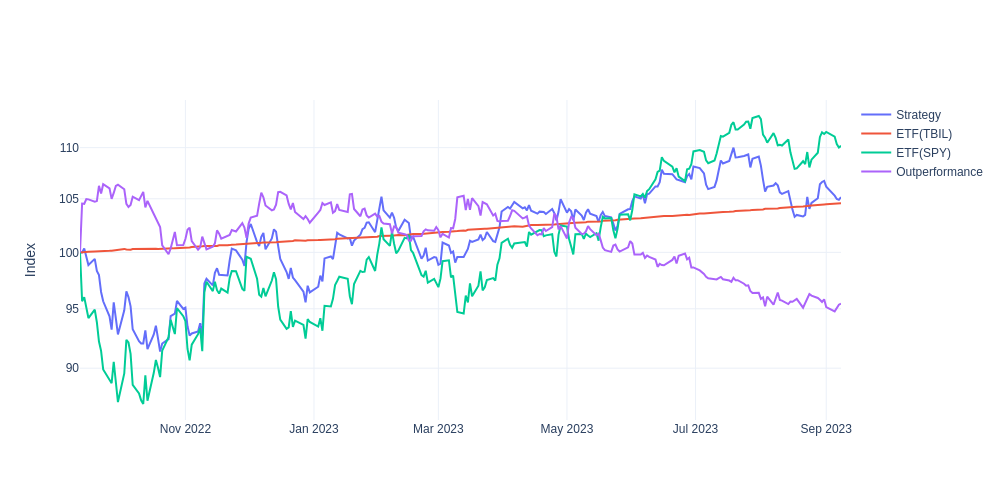

    from tradingenv.policy import AbstractPolicy


    class Portfolio6040(AbstractPolicy):
        """Implement logic of your investment strategy or RL agent here."""

        def act(self, state):
            """Invest 60% of the portfolio in SPY ETF and 40% in TLT ETF."""
            return [0.6, 0.4]


    # Run the backtest. `env` and `data` are not defined in this snippet: they
    # are assumed to be an existing trading environment and a table of price
    # series with 'SPY' and 'TBIL' columns.
    track_record = env.backtest(
        policy=Portfolio6040(),
        risk_free=data['TBIL'],
        benchmark=data['SPY'],
    )

    # The track_record object stores the results of your backtest.
    track_record.tearsheet()
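Assuming the list returned by `act` is interpreted as target portfolio weights, a constant 60/40 policy implies rebalancing whenever prices move, because the weights drift with asset returns. The arithmetic can be sketched in plain Python; `drifted_weights` and `rebalancing_trades` are hypothetical helpers for illustration, not functions of tradingenv, and the returns are made-up numbers.

```python
def drifted_weights(weights, asset_returns):
    """Weights after one period of returns, before any rebalancing."""
    grown = [w * (1.0 + r) for w, r in zip(weights, asset_returns)]
    total = sum(grown)
    return [g / total for g in grown]


def rebalancing_trades(target, current):
    """Weight changes needed to move from current back to target weights."""
    return [t - c for t, c in zip(target, current)]


if __name__ == "__main__":
    target = [0.6, 0.4]
    # Suppose the first asset gains 10% and the second is flat.
    current = drifted_weights(target, [0.10, 0.0])
    trades = rebalancing_trades(target, current)
    print(current)  # first weight has drifted above 0.6
    print(trades)   # sell the first asset, buy the second
```

The trades always sum to zero, since rebalancing only moves capital between assets.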

Relevant projects

btgym: an OpenAI Gym-compatible environment for the backtrader backtesting/trading library, designed to provide a gym-integrated framework for running reinforcement learning experiments in [close to] real-world algorithmic trading environments.

gym: a toolkit for developing and comparing reinforcement learning algorithms.

qlib: provides a strong infrastructure to support quant research.

rllib: an open-source library for reinforcement learning.

stable-baselines3: a set of reliable implementations of reinforcement learning algorithms in PyTorch.

Developers

You are welcome to contribute features, examples and documentation, or to report issues.

You can run the software tests by typing pytest in the command line, assuming that the folder tests is in the current working directory.

To refresh and build the documentation:

    pytest tests/notebooks
    sphinx-apidoc -f -o docs/source tradingenv
    cd docs
    make clean
    make html